Training Robots to Move Like Dogs

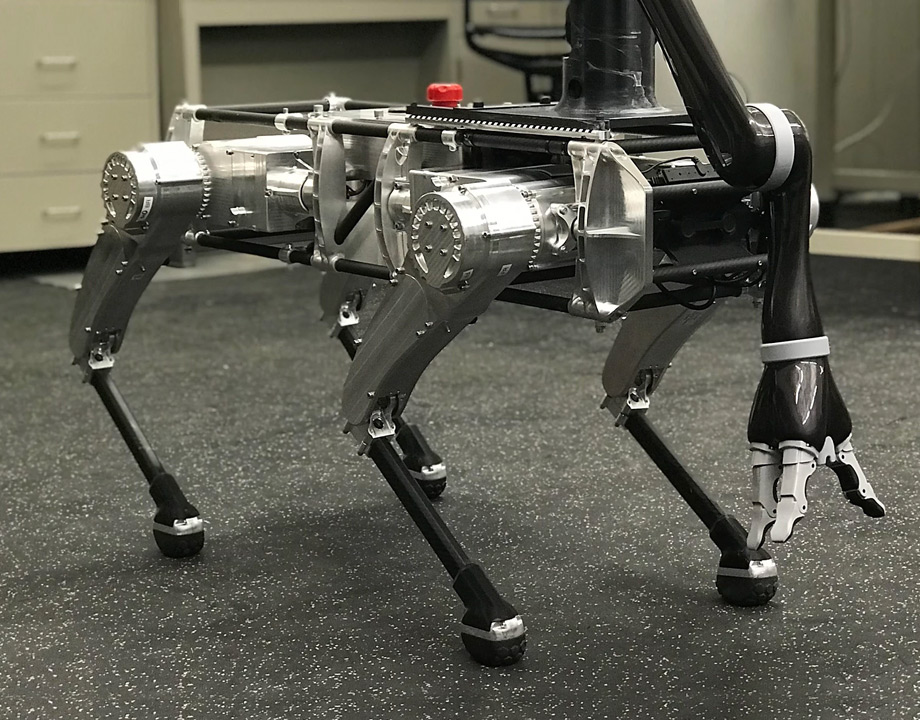

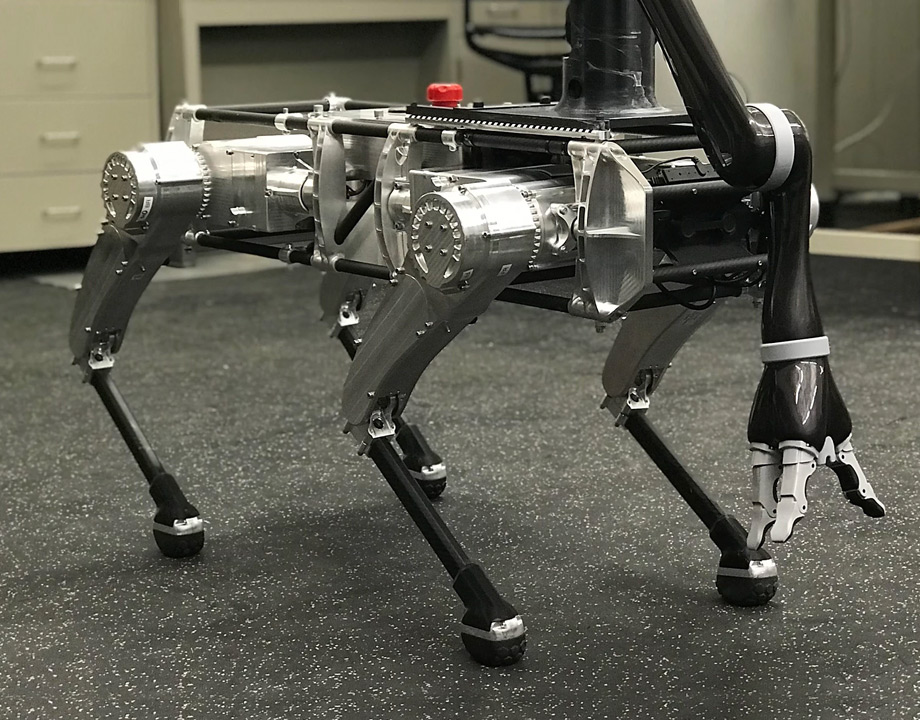

Control algorithms, sensors, and AI enhance robotic gait. Photo: Virginia Tech

Kaveh Hamed would like to see the lumbering, awkward gait long associated with robots recede into the distance like an old sci-fi movie. So he’s working to help robots move naturally and nimbly over rocky terrain, to walk at a natural human pace, and, in the case of four-legged robots, to run and gambol like a happy dog in the park.

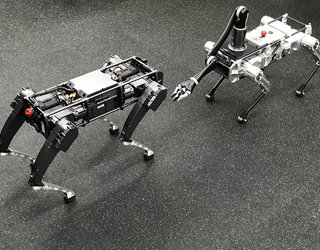

This past college football season, one of Hamed’s robodogs showed off its agility at Virginia Tech’s Lane Stadium. Wearing a Hokies helmet and standing in for the cheerleading squad, the quadrupedal robot celebrated every home-team score with push-ups in the end zone.

It’s harder than one might think. Walking and doing push-ups come naturally to most humans, but not to most robots. Human and animal gaits emerge from networks of neurons and nerves, and they are hard to reproduce with algorithms and sensors.

Bio-inspired Locomotion

Hamed is an assistant professor of mechanical engineering and head of the Hybrid Dynamic Systems and Robot Locomotion Lab at Virginia Tech. His research team is working to combine sensors, control algorithms, and artificial intelligence to improve the agility, stability, and dexterity of robotic dogs and to make the machines more responsive to their environment and to other robots.

One day, quadrupedal robots could act as guide dogs for the visually impaired or venture into the sites of industrial accidents to gather vital information. But for now, he and his team are writing control algorithms for three robotic dogs from Ghost Robotics, a Philadelphia-based company that produces legged robots and unmanned ground vehicles.

“If you look at dogs, they move in many ways: trotting, galloping, walking,” Hamed said. “So we’re working on those gaits as well.”

To do this, the team takes into account the way actual animals move, he said.

Vertebrates rely on networks of oscillatory neurons in the spinal cord to keep their balance when upright and moving. These neurons signal one another to generate rhythmic motion without conscious effort. That’s why legged animals can walk with their eyes closed, Hamed said: the rhythm just comes naturally.
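Roboticists often model these spinal-cord oscillators as central pattern generators. A central pattern generator is a set of coupled oscillators, one per leg, whose phase relationships define the gait. The Python sketch below is a minimal, hypothetical illustration of that idea. It is not the Virginia Tech team’s controller.

It couples four phase oscillators so that they lock into a trot, in which diagonal legs (left-front with right-hind, right-front with left-hind) swing together. The leg names, 2 Hz stepping frequency, coupling gain, and hip-angle amplitude are all assumed values.

```python
import math

# Hypothetical leg labels and trot phase offsets (fractions of one stride).
# In a trot, diagonal legs move together: LF with RH, RF with LH.
LEGS = ["LF", "RF", "LH", "RH"]
TROT_OFFSETS = {"LF": 0.0, "RF": 0.5, "LH": 0.5, "RH": 0.0}


def step_cpg(phases, offsets, freq_hz=2.0, coupling=4.0, dt=0.001):
    """Advance four coupled phase oscillators by one Euler step.

    Every oscillator runs at freq_hz and is pulled toward the phase
    relationship given by `offsets` (Kuramoto-style coupling).
    """
    omega = 2.0 * math.pi * freq_hz
    new_phases = {}
    for i in LEGS:
        dphi = omega
        for j in LEGS:
            if i == j:
                continue
            # Desired phase difference phi_j - phi_i, in radians.
            desired = 2.0 * math.pi * (offsets[j] - offsets[i])
            dphi += coupling * math.sin(phases[j] - phases[i] - desired)
        new_phases[i] = (phases[i] + dphi * dt) % (2.0 * math.pi)
    return new_phases


def hip_angles(phases, amplitude_rad=0.3):
    """Map each oscillator phase to a rhythmic hip-angle command (radians)."""
    return {leg: amplitude_rad * math.sin(phases[leg]) for leg in LEGS}


def phase_lag(phases, a, b):
    """Phase of leg b minus phase of leg a, as a fraction of a stride in [0, 1)."""
    return ((phases[b] - phases[a]) / (2.0 * math.pi)) % 1.0


if __name__ == "__main__":
    # Start from arbitrary, unsynchronized phases.
    phases = {"LF": 0.0, "RF": 1.0, "LH": 2.0, "RH": 3.0}
    for _ in range(5000):  # 5 simulated seconds at dt = 1 ms
        phases = step_cpg(phases, TROT_OFFSETS)
    print(round(phase_lag(phases, "LF", "RF"), 2))
    print(hip_angles(phases))
```

Starting from arbitrary phases, the oscillators settle within a few simulated seconds so that the left-front and right-front legs end up half a stride apart. Swapping in a different table of offsets would change the gait without touching the oscillator code. That separation is one appeal of the pattern-generator approach.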

But when navigating more complex environments, such as a flight of stairs, animals call upon other neurons to interpret what they see and feel as they move forward.

Sensors and control algorithms can produce similar effects in robotic dogs, Hamed said, giving them both natural-looking movement and a way to interpret their environment. His team uses two types of sensors on these robodogs to provide the balance and motion control that an animal’s nervous system supplies. Encoders, mounted at the joints, read each joint’s position, which tells the robot how its legs are positioned relative to one another. Inertial measurement units (IMUs) track the orientation of the robot’s body relative to the ground.
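A raw IMU does not measure orientation directly. Its gyroscope reports rotation rates, which drift when integrated. Its accelerometer senses gravity, which gives a drift-free but noisy tilt reading.

One common, simple way to combine the two is a complementary filter. The sketch below estimates body pitch that way. It is an illustrative assumption, not necessarily what Hamed’s robots use. The axis convention (x forward, z up, nose-up pitch positive), 100 Hz sample rate, gyro bias, and blend factor `alpha` are all hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def accel_pitch(ax, ay, az):
    """Pitch (rad, nose-up positive) implied by gravity in accelerometer data.

    Assumed body frame: x forward, y sideways, z up. Only trustworthy when
    the body is not accelerating much, because extra acceleration is
    mistaken for tilt.
    """
    return math.atan2(ax, math.sqrt(ay * ay + az * az))


def complementary_pitch(prev_pitch, pitch_rate, ax, ay, az, dt, alpha=0.98):
    """Blend integrated gyro rate (smooth but drifting) with accelerometer
    tilt (noisy but drift-free) into one pitch estimate."""
    gyro_estimate = prev_pitch + pitch_rate * dt
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch(ax, ay, az)


if __name__ == "__main__":
    true_pitch = math.radians(10.0)  # body held still, tilted 10 degrees nose-up
    gyro_bias = 0.01                 # rad/s of spurious rate from a biased gyro
    dt = 0.01                        # 100 Hz sampling
    ax, ay, az = G * math.sin(true_pitch), 0.0, G * math.cos(true_pitch)

    fused, gyro_only = 0.0, 0.0
    for _ in range(1000):  # 10 simulated seconds
        fused = complementary_pitch(fused, gyro_bias, ax, ay, az, dt)
        gyro_only += gyro_bias * dt
    print(math.degrees(fused), math.degrees(gyro_only))
```

With the body held still at 10 degrees, the fused estimate settles within about 0.3 degrees of the true tilt. Integrating the biased gyro alone drifts steadily, by about 5.7 degrees after 10 seconds, and never sees the initial tilt. A larger `alpha` trusts the gyro more: estimates get smoother, but the steady-state offset caused by gyro bias grows.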

The team also fits the robotic animals with cameras and lidar, a laser-based method of measuring distances, so they can map their surroundings precisely. The robots use machine vision to avoid obstacles or to make contact with objects.
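Before lidar returns can be assembled into a map, each range reading has to be turned into a point in the robot’s own frame. The following Python sketch shows that first step for a planar (2D) scan. It also includes a crude check for whether anything sits in a corridor directly ahead.

This is a hypothetical illustration rather than the team’s pipeline. The parameter names `angle_min` and `angle_increment` mirror fields of the ROS `sensor_msgs/LaserScan` message. The range cutoff, corridor width, and look-ahead distance are assumed values.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, max_range=10.0):
    """Convert a planar lidar scan into (x, y) points in the robot frame.

    Beam k points at angle_min + k * angle_increment (radians; 0 is straight
    ahead, counterclockwise positive). Readings that are inf, nan,
    non-positive, or beyond max_range are treated as "no echo" and skipped.
    """
    points = []
    for k, r in enumerate(ranges):
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue
        theta = angle_min + k * angle_increment
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


def path_blocked(points, corridor_half_width=0.3, look_ahead=1.0):
    """True if any point lies in a straight corridor just ahead of the robot."""
    return any(0.0 < x <= look_ahead and abs(y) <= corridor_half_width
               for x, y in points)


if __name__ == "__main__":
    # Five beams sweeping from -90 to +90 degrees in 45-degree steps.
    scan = [2.0, float("inf"), 0.8, 3.0, 1.5]
    pts = scan_to_points(scan, -math.pi / 2, math.pi / 4)
    print(len(pts), path_blocked(pts))
```

In this example, the 0.8 m return straight ahead marks the path as blocked. Real systems go further: they accumulate many such scans, taken at the robot’s estimated poses, into an occupancy grid or 3D map.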

Once the robots have measured their own motion and their surroundings, the goal is to get them to act on that information: onboard computers calculate the control actions that steer the robots from one point to another.
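A textbook way to steer a mobile robot from one point to another is a proportional go-to-goal law. It turns the remaining distance and heading error into a forward speed and a turn rate.

The sketch below assumes, hypothetically, that the robodog can be commanded like a wheeled “unicycle” robot, with a lower-level gait controller tracking the requested body velocity and yaw rate. The gains, speed cap, and stopping tolerance are illustrative values, not parameters from Hamed’s lab.

```python
import math


def go_to_goal(x, y, heading, goal_x, goal_y,
               k_lin=0.5, k_ang=1.5, max_speed=1.0, tolerance=0.05):
    """Proportional go-to-goal law returning (forward_speed, turn_rate).

    Assumes unicycle-style commands (body speed in m/s, yaw rate in rad/s)
    that a lower-level gait controller would then track.
    """
    dx, dy = goal_x - x, goal_y - y
    distance = math.hypot(dx, dy)
    if distance < tolerance:
        return 0.0, 0.0
    # Heading error wrapped to [-pi, pi] so the robot turns the short way.
    error = math.atan2(dy, dx) - heading
    error = math.atan2(math.sin(error), math.cos(error))
    # Slow down (down to zero) when pointed away from the goal.
    speed = min(max_speed, k_lin * distance) * max(0.0, math.cos(error))
    return speed, k_ang * error


if __name__ == "__main__":
    x, y, heading, dt = 0.0, 0.0, 0.0, 0.05
    for _ in range(400):  # 20 simulated seconds, simple Euler integration
        v, w = go_to_goal(x, y, heading, 2.0, 1.0)
        x += v * math.cos(heading) * dt
        y += v * math.sin(heading) * dt
        heading += w * dt
    print(round(x, 2), round(y, 2))
```

Scaling the speed by the cosine of the heading error makes the robot turn in place before walking off when the goal is behind it. This is a simple way to avoid wide, looping paths.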

Small Steps for Robodogs

Still, Hamed said that although more legged robots are being built every year, the field has a long way to go before robots can match the agility of their two- or four-legged sources of inspiration.

“We believe that the agility we see in animal locomotion—such as in a dog, a cheetah, or a mountain lion—cannot currently be closely pursued by robots, even state-of-the-art ones,” he said. “Robot technology is advancing rapidly, but there is still a fundamental gap between what we see in robots and what we see in their biological counterparts.”

So far, the researchers have simulated and begun testing several gaits that mirror those of real animals. The robotic dogs have begun to amble, trot, and run at sharper angles with more agility, balance, and speed. The team is also exploring ways to integrate artificial intelligence into its control algorithms to improve the robots’ real-time decision-making in real-world environments.

Hamed finds the first tests of the control algorithms on his robotic dogs promising, but developing those algorithms will be an ongoing process.

“Are the algorithms we’re using actually bio-inspired?” he said. “Are they actually acting like dogs? We are trying to do the math. But it must be bio-inspired. We must look at animals and then correct our algorithms to see how they react to this scenario and how our control algorithms react.”

Jean Thilmany is an engineering writer based in St. Paul, Minn.