Augmented Reality Not Quite Real for Engineers

Augmented reality, the view of a physical, real-world setting augmented by computer-generated graphics or other information, is becoming a hot topic among users of portable electronic devices seeking directions, restaurant recommendations and reservations, or online shopping. Taking the technology to the next level, where an engineer can display hidden items such as underground utilities on a small tablet or smartphone, is a lot trickier.

The problems center on accuracy. In demanding fields such as engineering and medicine, where AR research is also under way, fractions of a centimeter or an inch make a big difference. "The potential for augmented reality is great but achieving it is extremely difficult," says Stephane Cote, a research director and fellow at Bentley Systems in Quebec. "For engineering, accuracy is extremely important. You're having an impact on people's lives. You need accurate data."

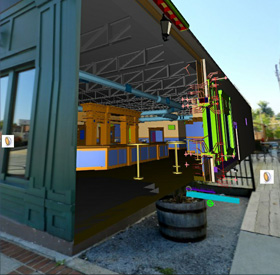

Integrating drawings and cutouts with real-world images provides context for an engineer. Image: Bentley Systems

Researchers have been working with augmented reality for years, but the fast development of smartphones and tablets has been a potential game changer. It opened up an entirely new, portable venue for AR. The iPhone already sports a number of apps, including ones for determining latitude and longitude, and others are available for other smartphones. Need to locate a restaurant? Plug in the name, and a streetscape pops up with the restaurant's name above the building.

It's in the Details

Cote is working on practical engineering applications that require much more accuracy. But how could an engineer view what is beneath the ground or behind the wall of a building? Ideally, a field engineer would point a device at a building or other location and a variety of information would be overlaid on the image. But achieving the necessary accuracy is hard. "You'd need to know exactly the location of the smart phone, the x,y,z coordinates," says Cote. "Then, you'd have to know what is beneath the ground to be able to upload the data."

Global positioning systems or compass readings are a start; they are what commercial AR applications now rely on. But the information is only approximate, meaning augmentation is only approximate.
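To see why approximate sensor readings produce only approximate augmentation, a little trigonometry helps. The sketch below is illustrative only: the function name `overlay_offset_m` and the error figures are assumptions, not values from Cote's work. It adds a position error to the sideways shift that a heading error causes at a given viewing distance.

```python
import math


def overlay_offset_m(position_error_m: float,
                     heading_error_deg: float,
                     distance_m: float) -> float:
    """Rough worst-case sideways displacement of an overlaid object, in meters.

    The position error shifts the overlay directly; the heading error
    shifts it by distance * tan(error) at the viewing distance.
    """
    heading_shift_m = distance_m * math.tan(math.radians(heading_error_deg))
    return position_error_m + heading_shift_m


# Hypothetical error figures, chosen only to illustrate the arithmetic.
offset = overlay_offset_m(position_error_m=5.0,
                          heading_error_deg=5.0,
                          distance_m=20.0)
print(f"Overlay could be off by about {offset:.2f} m")
```

With these assumed figures the overlay lands several meters from the real object, far outside the fractions of a centimeter that engineering work demands.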

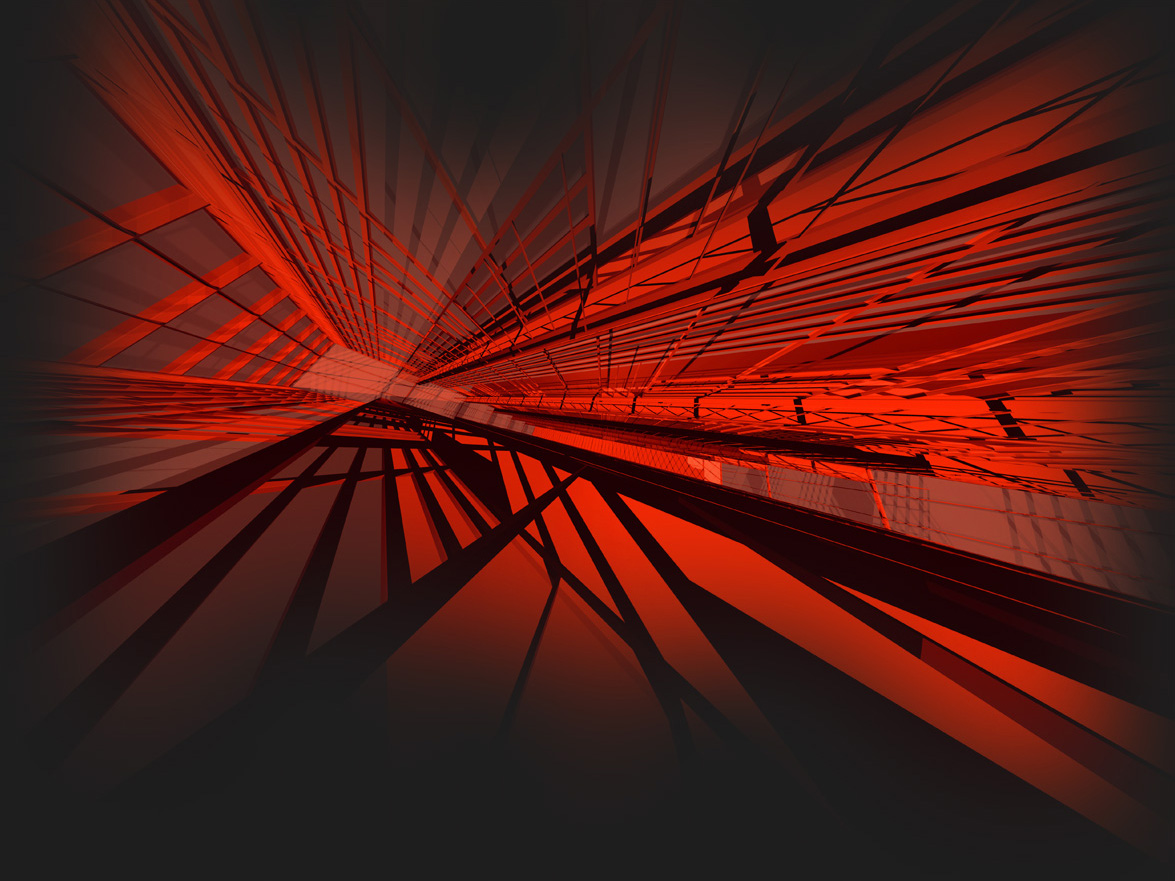

Underground pipes are identified by color and placed in "virtual excavation." A vertical slicing tool provides context. Image: Bentley Systems

As a workaround, Cote and his small team determined that panoramic images could be used, and linked with 3-D data. In an application devised for a mobile device with an orientation sensor and GPS, the system first gets the GPS location and uses it to download and display the closest panorama. Then, the system overlays the 3-D model onto the panorama. The system simply gets the current tablet orientation from the orientation sensor and displays the panorama in the same orientation. Then, the user can use the tablet as an "aim and shoot" device: to get information about a specific object, the user aims at it with the device's crosshair, says Cote.
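The panorama workflow described above can be sketched in a few lines. This is a minimal illustration, not Bentley's implementation: the `Panorama` class, the function names, the sample coordinates, and the assumption that panoramas are stored as equirectangular images whose left edge faces north are all hypothetical.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0


@dataclass
class Panorama:
    name: str
    lat: float  # degrees
    lon: float  # degrees


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/lon points, in meters."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def closest_panorama(panoramas, lat, lon):
    """Step 1: pick the stored panorama nearest to the GPS fix."""
    return min(panoramas, key=lambda p: haversine_m(lat, lon, p.lat, p.lon))


def crosshair_pixel(heading_deg, pitch_deg, width, height):
    """Step 2: map the tablet orientation to the panorama pixel under the crosshair.

    Assumes an equirectangular image: heading 0 (north) at the left edge,
    pitch +90 (straight up) at the top row.
    """
    x = int((heading_deg % 360.0) / 360.0 * width) % width
    y = int((90.0 - pitch_deg) / 180.0 * height)
    return x, min(height - 1, max(0, y))


# Hypothetical panorama positions and device readings.
panos = [Panorama("street_a", 46.8139, -71.2080),
         Panorama("street_b", 46.8150, -71.2100)]
nearest = closest_panorama(panos, 46.8140, -71.2081)
pixel = crosshair_pixel(heading_deg=90.0, pitch_deg=0.0, width=4000, height=2000)
print(nearest.name, pixel)
```

Because the 3-D model is aligned to each panorama ahead of time, the overlay stays registered to the image even when the GPS fix is only approximate. The GPS reading only has to be good enough to select the right panorama.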

Workaround

Cote acknowledges the scheme is not true augmented reality, but it does offer a stable workaround. For underground utilities, he and his team devised a "virtual excavation" that places pipe, for instance, within it. Color coding and added texture help provide a 3-D view within the excavation, but they do little to determine an accurate distance between the pipes. That is important for workers seeking to fit new pipes within the existing grid, for instance. As a solution, Cote worked out a vertical slicing tool to display a 2-D vertical section of a model.
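One way to picture what a vertical slice provides is to intersect each pipe's centerline with a vertical plane and measure distances in the resulting 2-D section. The sketch below is an assumption-laden illustration rather than Cote's tool: pipes are modeled as straight 3-D segments, and `slice_pipe` and the sample coordinates are hypothetical.

```python
import math
from typing import Optional, Tuple

Point3 = Tuple[float, float, float]  # (x, y, z) in meters, z negative below ground
Point2 = Tuple[float, float]


def slice_pipe(a: Point3, b: Point3,
               origin: Point2, direction: Point2) -> Optional[Point2]:
    """Intersect pipe centerline a-b with a vertical plane.

    The plane passes through `origin` and runs along the horizontal
    `direction`. Returns (distance along the section, elevation), or None
    if the pipe does not cross the plane or lies within it.
    """
    dx, dy = direction
    length = math.hypot(dx, dy)
    dx, dy = dx / length, dy / length
    nx, ny = -dy, dx  # horizontal normal of the slicing plane
    sa = (a[0] - origin[0]) * nx + (a[1] - origin[1]) * ny
    sb = (b[0] - origin[0]) * nx + (b[1] - origin[1]) * ny
    if sa == sb or sa * sb > 0:  # parallel to the plane, or both ends on one side
        return None
    t = sa / (sa - sb)
    px = a[0] + t * (b[0] - a[0])
    py = a[1] + t * (b[1] - a[1])
    pz = a[2] + t * (b[2] - a[2])
    u = (px - origin[0]) * dx + (py - origin[1]) * dy
    return (u, pz)


# Two hypothetical pipes crossing a slice that runs along the x-axis.
water = slice_pipe((2.0, -5.0, -1.5), (2.0, 5.0, -1.5), (0.0, 0.0), (1.0, 0.0))
sewer = slice_pipe((3.2, -5.0, -2.0), (3.2, 5.0, -2.0), (0.0, 0.0), (1.0, 0.0))
spacing = math.hypot(sewer[0] - water[0], sewer[1] - water[1])
print(f"Center-to-center spacing in the section: {spacing:.2f} m")
```

In the 2-D section the spacing becomes a simple planar distance, which is hard to judge from a shaded 3-D view. The figure is a true center-to-center distance only where the pipes cross the slice roughly at right angles.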

If what lies beneath the surface is unknown, Cote says new 3-D ground penetrating radar can be used to lay out a "picture" of what is beneath the ground. That reading can then be placed within a "virtual excavation" to put it into context for the operator.

Developing Drawings

For building construction, Cote is looking into integrating construction drawings into a virtual image. Engineers have long relied on drawings, and lots of them, to precisely plan and guide them through myriad tasks, including fabricating machinery, manufacturing products, and designing and building simple and complex structures, buildings and infrastructure, as well as their operating systems. Especially for large projects, however, the complexity and volume of the drawings can present problems for designers and builders in determining how a detail corresponds to the physical world on a building site.

The development of computer-generated 3-D models eases such problems, but viewing them on tablets and small devices in the field is difficult at best. And because drawings remain the legally approved document for construction, designers still have to remove one dimension from a 3-D model to create a 2-D drawing.

Cote's first attempt produced two options. The first is a "sliding plane" technique that slides the drawing's plane to its exact location within a structure, attracting the user's attention by indicating where the drawing belongs. The second is similar to virtual excavation: a wall is cut away to reveal the inside of the building model, and the 2-D section drawing is shown at exactly the location it represents, in a representation that retains full context.

Cote describes his work as the first steps in the development of a viable tool for engineers. "I think we'll get to a serious augmented reality [tool] in the next few years," he says. "But there are complex problems. That's why this is all in the research field."

"The potential for accuracy is great, but achieving it is extremely difficult." Stephane Cote, Bentley Systems